On 9th November, Ipsos MORI organised an interesting event looking at the relationship between customer experience and brand image. One result of that event is the publication Great Expectations, which explores the causes, effects and changing nature of customer service expectations. We are pleased to publish an extract from the publication that deals with measuring service expectations.

The prominence of expectations within many theories means that researchers have experimented with a variety of ways of measuring customer service expectations. Here, it is important to distinguish between measures of customer expectations regarding a specific service encounter and measures of overall expectations of a particular service or provider.

In general, customers find it easier to formulate and articulate expectations about a specific element or encounter, so transactional-level data can provide useful context. With this in mind, SERVQUAL is worth highlighting, as it is one of the most commonly used frameworks for measuring customer service expectations.

Developed and refined since the 1980s, the SERVQUAL tool fits within the service quality ‘gap’ approach (Parasuraman et al. 1985) and therefore aims to improve service quality by reducing the disparity between customer expectations and perceptions. The SERVQUAL questionnaire is organised around a group of factors that the researchers saw as being most important in influencing service quality (a later revision of the framework defined these as Reliability, Assurance, Tangibles, Empathy and Responsiveness).

Each of these factors can be expanded into a larger set of service attributes, which are reflected in a battery of 22 questions. The most basic SERVQUAL analysis involves asking consumers to:

- Rate the level of service of an excellent provider for a variety of attributes

- Rate how a particular service provider performs for each attribute

In this model, asking customers to provide a score for an excellent service is thought to provide some measure of service expectations.
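In the gap approach, the score for each attribute is usually computed as perception minus expectation (P − E), so a negative value marks an attribute where the provider falls short of the "excellent" benchmark. The sketch below averages these gaps within each dimension. The 1 to 7 rating scale, the attribute ratings and the function name `gap_scores` are illustrative assumptions, not data or code from the study.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical ratings on an assumed 1-7 scale: (dimension, expectation, perception).
# Expectation = rating given to an "excellent" provider;
# perception = rating given to the provider being assessed.
ratings = [
    ("Reliability", 7, 5),
    ("Reliability", 6, 6),
    ("Responsiveness", 7, 4),
    ("Tangibles", 5, 6),
    ("Empathy", 6, 5),
    ("Assurance", 6, 6),
]

def gap_scores(rows):
    """Return the mean perception-minus-expectation gap (P - E) for each dimension."""
    gaps = defaultdict(list)
    for dimension, expected, perceived in rows:
        gaps[dimension].append(perceived - expected)
    return {dimension: mean(values) for dimension, values in gaps.items()}

for dimension, gap in gap_scores(ratings).items():
    print(f"{dimension}: {gap:+.2f}")
```

Averaging per dimension keeps the output at the level of the five factors, which is usually how SERVQUAL results are reported; the attribute-level gaps are still available before averaging if finer detail is needed.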

As a service quality improvement tool, SERVQUAL has been widely criticised for a number of flaws, including the fact that asking customers about ‘excellent service’ often results in uniformly high levels of stated expectations. Researchers have tried to reduce this weakness by adapting the questions, for example by also asking consumers to rate their expectations of an ‘adequate’ provider.
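Collecting both an ‘adequate’ and an ‘excellent’ rating turns a single ceiling into a band, sometimes described as a zone of tolerance. The sketch below shows one way the two ratings might be used: it reports the gap to each benchmark and says whether a perception score falls below, within or above the band. The function name `assess`, the labels and the example scores are hypothetical and chosen for illustration only.

```python
from typing import NamedTuple

class ToleranceResult(NamedTuple):
    adequacy_gap: float     # perception minus the 'adequate' expectation
    superiority_gap: float  # perception minus the 'excellent' expectation
    zone: str               # where the perception falls relative to the band

def assess(perception: float, adequate: float, excellent: float) -> ToleranceResult:
    """Compare a perception rating with an assumed band bounded by the
    'adequate' rating (lower edge) and the 'excellent' rating (upper edge)."""
    if adequate > excellent:
        raise ValueError("the adequate expectation should not exceed the excellent one")
    if perception < adequate:
        zone = "below adequate"
    elif perception > excellent:
        zone = "above excellent"
    else:
        zone = "within tolerance zone"
    return ToleranceResult(perception - adequate, perception - excellent, zone)

print(assess(perception=5, adequate=4, excellent=7))
```

With only the ‘excellent’ rating, a score of 5 against 7 looks like a shortfall; the added ‘adequate’ rating shows it is still acceptable to the customer.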

The tool has also been criticised because it asks respondents to give absolute scores for service expectations and perceptions ex post, which means the expectation scores provided do not necessarily reflect the level of expectations at the time of the encounter (Grönroos, 1993). Given the practical difficulties of asking respondents to provide expectation scores immediately before a service encounter, one proposed solution has been to ask respondents directly how a particular attribute performed relative to expectations (Carman, 1990).

Despite these and other criticisms, SERVQUAL has been applied widely across a diverse range of sectors such as banking, tourism, airlines and hospitals. A similar tool, the Common Measurements Tool (CMT), has been tailored to better fit public sector service requirements, although it retains many of the concepts found in standard SERVQUAL analysis. Ultimately, elements of the SERVQUAL approach can provide useful insights when exploring service expectations.

In a new study carried out by Ipsos MORI, we tried to measure expectations across a variety of sectors and to identify drivers of positive and negative experiences. In this case we asked customers to recall recent experiences with service providers, directly asked them how the experience compared to expectations and asked why the interaction exceeded or failed to meet expectations.

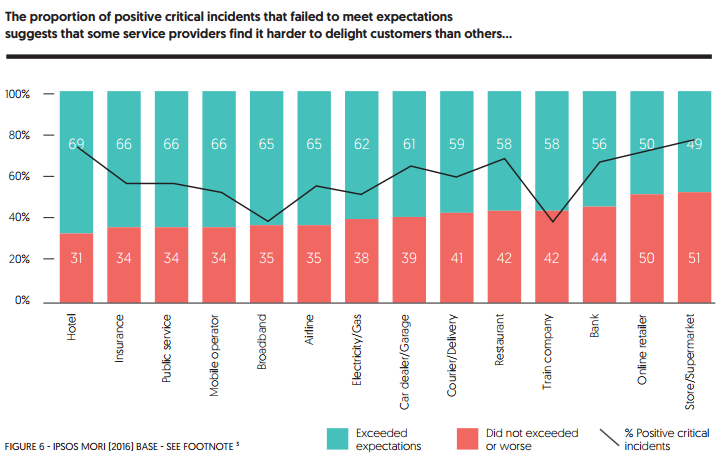

This data can provide us with some insight into the varying levels of expectations across different sectors:

Experiences: Hotel (n=552), Insurance (n=149), Public service (n=210), Mobile operator (n=401), Broadband (n=267), Airline (n=325), Electricity/Gas (n=149), Car Dealer/Garage (n=167), Courier/Delivery (n=183), Restaurant (n=218), Train company (n=104), Bank (n=518), Online retailer (n=396), Store/supermarket (n=345)

Here, focusing on positive experiences that failed to exceed customer expectations might provide a proxy for the level of expectations, since sectors that deliver positive experiences but often fail to exceed expectations could be seen to face more demanding customers.
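The proxy described above reduces to a simple share per sector: among experiences rated positive, what proportion did not exceed expectations? The sketch below computes it from survey-style records. The records, the answer codes ("exceeded", "met") and the function name `share_not_exceeded` are made up for illustration and are not the study's data.

```python
from collections import Counter

# Hypothetical records: (sector, experience rated positive?, outcome vs expectations).
# Assumed answer codes for the outcome: "exceeded", "met", "fell short".
records = [
    ("Public service", True, "exceeded"),
    ("Public service", True, "exceeded"),
    ("Public service", True, "met"),
    ("Online retailer", True, "exceeded"),
    ("Online retailer", True, "met"),
    ("Online retailer", False, "fell short"),
]

def share_not_exceeded(rows):
    """For each sector, the share of positive experiences that did NOT exceed expectations."""
    positive = Counter()
    not_exceeded = Counter()
    for sector, is_positive, outcome in rows:
        if not is_positive:
            continue  # the proxy only looks at positive experiences
        positive[sector] += 1
        if outcome != "exceeded":
            not_exceeded[sector] += 1
    return {sector: not_exceeded[sector] / positive[sector] for sector in positive}

print(share_not_exceeded(records))
```

Under this proxy, a higher share points to a sector whose customers are harder to impress, even when the service itself is judged positively.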

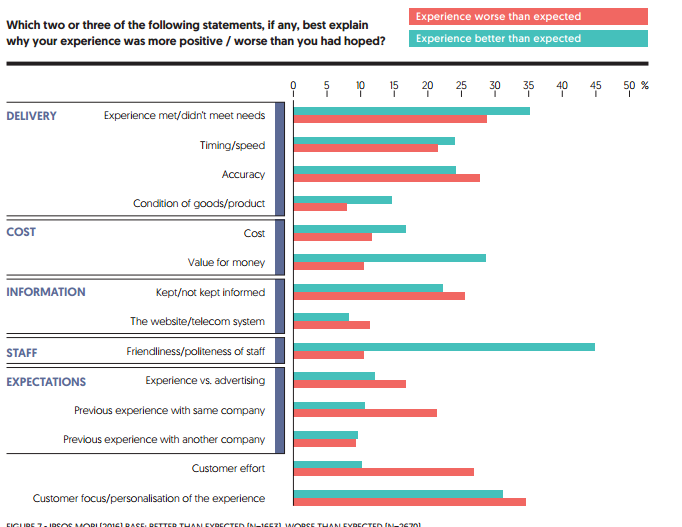

We can see that two thirds of positive public sector experiences exceeded customer expectations; by comparison, online retailers and high street stores exceeded customer expectations in only 50% of positive interactions. Consistent with previous research, our data also shows that customers vary in their explanations of what makes an experience better or worse than expected.

Three groups of reasons are worth highlighting:

Satisfiers

Friendliness/politeness of staff and value for money were frequently cited by customers who felt that their service encounter was better than their expectations but were mentioned less frequently by customers who had experiences that were worse than expected.

Dissatisfiers

Previous experiences with the same company tended to be mentioned more often by customers who had an encounter that was worse than expected. Having to put in more effort during an encounter was also a key dissatisfier.

Dual Threshold Factors

Five factors came out strongly as both satisfiers and dissatisfiers. The final service outcome is one of the most frequently mentioned factors of all, a useful reminder that it should not be forgotten alongside softer aspects of service. Also important, as previous work has shown, are information provision, accuracy and timeliness. Customer focus/personalisation (or a lack of it) has not been as widely discussed in previous research, but it was mentioned both by customers whose experience exceeded expectations and by those whose experience failed to meet them. This might be an example of how customer expectations have changed over the years in response to the changing service customers receive.
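The three groups can be read as a comparison of how often each reason is cited by customers whose experience was better than expected versus worse than expected. The extract does not say exactly how reasons were assigned to groups, so the rule below is an assumption: a reason counts as dual threshold if it is cited often in both groups, and as a satisfier or dissatisfier if it is cited often in one group and at least twice as often there as in the other. The thresholds, mention rates and the function name `classify_reason` are all hypothetical.

```python
# Hypothetical mention rates: (share citing the reason when the experience was
# better than expected, share citing it when the experience was worse than expected).
mention_rates = {
    "Friendliness of staff": (0.30, 0.08),
    "Previous experience with company": (0.05, 0.22),
    "Final service outcome": (0.35, 0.40),
}

def classify_reason(better_rate, worse_rate, high=0.15, ratio=2.0):
    """Assumed rule: 'dual threshold' if frequently cited in both groups;
    'satisfier' or 'dissatisfier' if frequently cited in one group and at
    least `ratio` times as often there as in the other."""
    if better_rate >= high and worse_rate >= high:
        return "dual threshold"
    if better_rate >= high and better_rate >= ratio * worse_rate:
        return "satisfier"
    if worse_rate >= high and worse_rate >= ratio * better_rate:
        return "dissatisfier"
    return "unclassified"

for reason, (better, worse) in mention_rates.items():
    print(f"{reason}: {classify_reason(better, worse)}")
```

Changing `high` or `ratio` moves reasons between groups, so any real classification would need thresholds justified against the survey's base sizes.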
